Introduction¶

DFACE is an offline face recognition SDK for IoT and mobile devices. It supports heterogeneous edge computing across CPU, GPU, DSP, and other processors. The SDK includes modules for face detection, face recognition, face tracking, RGB liveness detection, NIR liveness detection, 98-point face landmarks, pose estimation, face quality scoring, occlusion detection, gender and age detection, and more. Thanks to its rich functionality and fast, stable operation, DFACE has been widely used in industrial firefighting, smart buildings, smart education, smart stores, and other industries, where it has been highly praised.

Frameworks¶

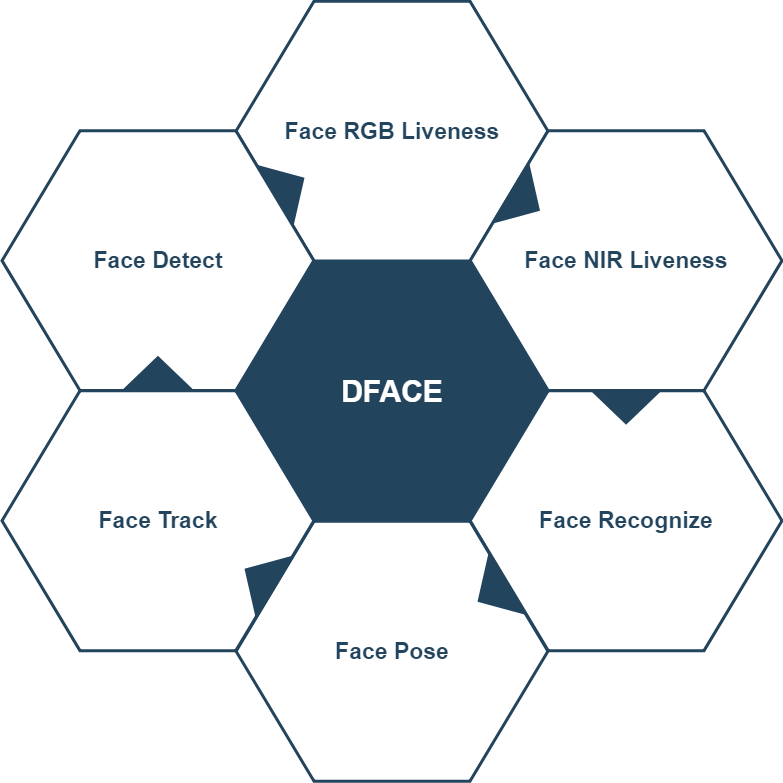

The figure above shows the basic framework of DFACE. It consists mainly of 8 functional modules, each responsible for part of the functionality, such as face detection (DfaceDetect), feature extraction (DfaceRecognize), and liveness detection (DfaceRGBLiveness).

Technical Background¶

1.Technical Overview¶

1.Ultra-efficient deep neural network¶

There is often a trade-off between recognition accuracy and speed: the higher the accuracy, the slower the speed. We choose the scheme with the best energy efficiency ratio. The recognition network adopts the MobileNet V3 structure, and the proportion of Asian faces in the training data is increased, so that high accuracy can be maintained even on devices with poor performance.

Embedded devices are often more constrained. For example, ARM processors typically offer modest computing performance and relatively little memory. We have optimized the low-level acceleration of the algorithm for ARM and further reduced the model size using compression techniques such as pruning, distillation, and quantization, so that it can run on embedded terminals.
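As an illustration of the quantization idea mentioned above, the following is a minimal sketch of symmetric per-tensor int8 quantization. It is not DFACE's actual compression pipeline, which is not described here; the function names `quantize_int8` and `dequantize_int8` are hypothetical and chosen only for this example.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization of float weights to the int8 range."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Map the largest magnitude to 127; use 1.0 when all weights are zero.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_int8(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the int8 values."""
    return [q * scale for q in quantized]


weights = [0.50, -1.27, 0.03, 1.00]
q_weights, scale = quantize_int8(weights)
restored = dequantize_int8(q_weights, scale)
```

Each weight is stored in one byte instead of four, at the cost of a rounding error of at most half the scale per weight.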

2.Multi-platform support¶

Thanks to our end-to-end design, one common source code base can be compiled for multiple platforms. Currently, the SDK supports Linux, Windows, Android, iOS and other systems, on Intel, AMD, and ARM architectures.

3.Easy to use SDK¶

Thanks to its architecture, the SDK can be installed (deployed) quickly and easily on all platforms. At the user layer, we use a hot-swap loading mode: the user only needs to include the header file and does not rely on any third-party library to run the SDK. Compatibility across platforms is good, and the SDK is easy to use.

2.Algorithm function¶

1. Face Detection¶

Face detection uses an advanced deep learning algorithm trained on millions of pictures. It can quickly and accurately detect and locate human faces even in complex environments, with a detection rate of 99.97% and a processing time of 4 ms to 20 ms per frame.

2. Face tracking¶

A multi-face tracking algorithm, suitable for multi-person recognition scenarios.
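The tracking method used by DFACE is not specified here. As a generic illustration of how multiple faces can be followed across frames, the sketch below greedily matches new detections to existing tracks by intersection over union (IoU). The names `iou` and `update_tracks` and the `min_iou` threshold are assumptions made for this example.

```python
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def update_tracks(
    tracks: Dict[int, Box],
    detections: List[Box],
    next_id: int,
    min_iou: float = 0.3,  # hypothetical matching threshold
) -> Tuple[Dict[int, Box], int]:
    """Greedily assign each detection to the best unmatched track, or start a new one."""
    updated: Dict[int, Box] = {}
    unmatched = set(tracks)
    for det in detections:
        best_id, best_score = None, min_iou
        for track_id in sorted(unmatched):
            score = iou(tracks[track_id], det)
            if score >= best_score:
                best_id, best_score = track_id, score
        if best_id is None:
            # No existing track overlaps enough: allocate a new track id.
            best_id = next_id
            next_id += 1
        else:
            unmatched.discard(best_id)
        updated[best_id] = det
    return updated, next_id
```

Tracks that receive no detection in a frame are dropped in this sketch; a production tracker would usually keep them alive for a few frames.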

3. Face landmarks detection¶

The 98-point face landmark regression technology, based on deep learning, runs stably in complex environments, including faces under lighting changes, various poses, and expression changes. Unlike traditional landmark technologies such as ASM and CLM, we use CNNs for all local landmarks of the face, so the model is more robust and landmark positioning is more accurate.

4. Face pose estimation¶

Deep learning and 3D technology are integrated to determine the 3D depth information of the face in front of the camera, such as the angle, elevation angle, yaw angle, and xyz position.

5. Feature extraction¶

Face recognition maps different face images to feature vectors of the same dimension. We optimize the loss function so that the difference between faces of the same category is small and the difference between faces of different categories is very large, which makes the features well suited to high-precision face recognition. At present, each face feature is unified into an array of 1024 bytes, which is convenient for subsequent face comparison.
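To make the comparison step concrete, here is a minimal sketch that compares two 1024-byte features with cosine similarity. The internal layout of the feature array is not documented here, so the sketch assumes it holds 256 little-endian float32 values; that layout, the choice of cosine similarity, and the function names are all assumptions for illustration only.

```python
import math
import struct
from typing import Sequence, Tuple

FEATURE_BYTES = 1024       # feature size stated in the text
FLOATS_PER_FEATURE = 256   # assumption: 1024 bytes = 256 float32 values


def decode_feature(raw: bytes) -> Tuple[float, ...]:
    """Decode a raw feature buffer under the assumed float32 layout."""
    if len(raw) != FEATURE_BYTES:
        raise ValueError(f"expected {FEATURE_BYTES} bytes, got {len(raw)}")
    return struct.unpack(f"<{FLOATS_PER_FEATURE}f", raw)


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def compare_features(raw_a: bytes, raw_b: bytes) -> float:
    """Similarity score in [-1, 1]; higher means more likely the same person."""
    return cosine_similarity(decode_feature(raw_a), decode_feature(raw_b))
```

A caller would compare the returned score against a threshold tuned on its own data to decide whether two faces match.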

6. Face quality score¶

DFACE provides a deep-learning-based face quality scoring network, which is well suited to face preprocessing and helps avoid invalid face registration and recognition.

7. NIR liveness detection¶

The depth of the face is calculated based on the principle of binocular disparity, and the image formed by infrared reflection is analyzed at the same time, which makes liveness detection more effective. It can resist common attacks such as video replay and printed photos.

8. RGB liveness detection¶

RGB silent liveness detection works with a single ordinary camera and can effectively resist video replay attacks, printed photo attacks, mask attacks, etc. Compared with traditional active liveness detection, RGB liveness detection offers a better user experience and is more widely applicable.

9. RGB liveness + NIR liveness¶

Combining the advantages of the two liveness detection methods yields a low-cost, high-security, payment-level solution that is especially suitable for payment applications and financial self-service terminal equipment.
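How the two results are combined is not specified here. One simple possibility, sketched below, is to accept a face as live only when both the RGB and the NIR checks pass. The function name `is_live`, the score range, and the thresholds are hypothetical.

```python
def is_live(
    rgb_score: float,
    nir_score: float,
    rgb_threshold: float = 0.5,  # hypothetical threshold
    nir_threshold: float = 0.5,  # hypothetical threshold
) -> bool:
    """Accept only when both liveness scores reach their thresholds.

    Scores are assumed to lie in [0, 1], with higher meaning more likely live.
    """
    return rgb_score >= rgb_threshold and nir_score >= nir_threshold
```

Requiring both checks to pass favors security over convenience: an attack has to defeat both detectors, while a genuine user is rejected if either one fails.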

Solution Selection¶

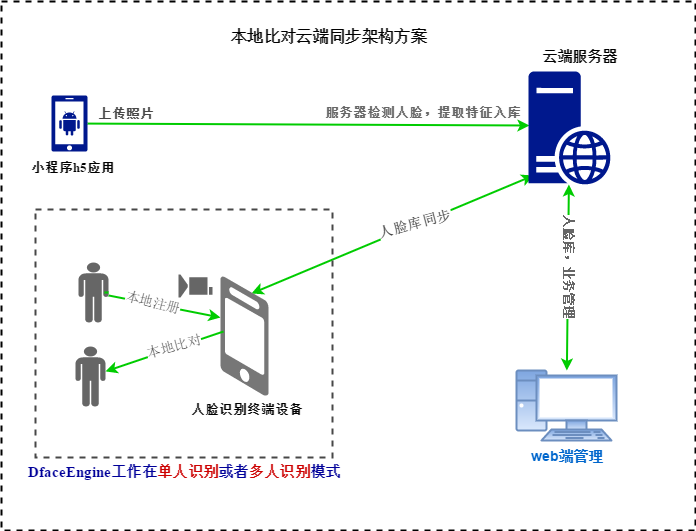

1.Local face feature extraction, local comparison¶

The local device captures a face picture, detects the face, performs liveness detection, extracts the face features, and compares them with the local database. The cloud is mainly used for face database management and for communication and synchronization with local devices.
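The local comparison step can be sketched as a 1:N search over an in-memory database. The sketch assumes features are already decoded into L2-normalized float vectors, so that the dot product equals cosine similarity; the `identify` function, the dictionary layout, and the threshold are hypothetical and not part of the DFACE API.

```python
from typing import Dict, Optional, Sequence


def identify(
    probe: Sequence[float],
    database: Dict[str, Sequence[float]],
    threshold: float = 0.5,  # hypothetical acceptance threshold
) -> Optional[str]:
    """Return the best-matching identity, or None if no score reaches the threshold.

    Assumes all vectors are L2-normalized, so the dot product is the cosine similarity.
    """
    best_name, best_score = None, threshold
    for name, feature in database.items():
        score = sum(p * f for p, f in zip(probe, feature))
        if score >= best_score:
            best_name, best_score = name, score
    return best_name


# Toy 3-dimensional features stand in for real face features.
local_database = {"alice": [1.0, 0.0, 0.0], "bob": [0.0, 1.0, 0.0]}
match = identify([0.6, 0.8, 0.0], local_database)
```

A linear scan like this is usually adequate for the small databases kept on a single device; larger galleries would call for an index.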

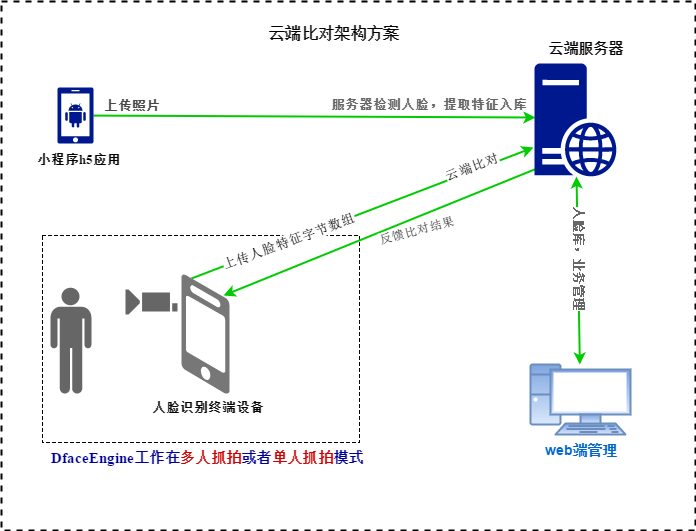

2.Local face feature extraction, cloud comparison¶

The local recognition device captures a face picture, detects the face, performs liveness detection, extracts the face features, and uploads the feature byte array or face image to the cloud server for comparison.
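The upload format is not defined here. As one possible approach, the sketch below wraps the feature byte array in a JSON body using Base64, and shows the matching decode on the server side. The field names `device_id` and `feature_b64` and both function names are assumptions for illustration.

```python
import base64
import json


def build_compare_request(device_id: str, feature: bytes) -> str:
    """Serialize a feature byte array into a JSON request body (hypothetical schema)."""
    payload = {
        "device_id": device_id,
        "feature_b64": base64.b64encode(feature).decode("ascii"),
    }
    return json.dumps(payload)


def parse_compare_request(body: str) -> bytes:
    """Server side: recover the raw feature bytes from the JSON body."""
    return base64.b64decode(json.loads(body)["feature_b64"])
```

Uploading only the feature array keeps the request small (1024 bytes before encoding) and avoids sending the face image itself.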